When the Algorithm Gets It Wrong

It really is a matter of life or death.

Case Study

A clinical scenario for nurses

ED triage — 08:42

A 52-year-old woman arrives at the emergency department.

Chief complaint:

“I just feel really off… I’m nauseated and exhausted.”

Vital signs:

- HR: 102 bpm

- BP: 148/86 mmHg

- RR: 20 breaths/min

- SpO₂: 97%

Symptoms reported during triage:

- nausea

- fatigue

- mild chest pressure

- shortness of breath

She looks uncomfortable but not in obvious distress.

The triage nurse documents the symptoms and enters them into the electronic record.

Behind the scenes, the hospital’s AI triage support tool analyzes the chart in real time to estimate the risk of acute coronary syndrome.

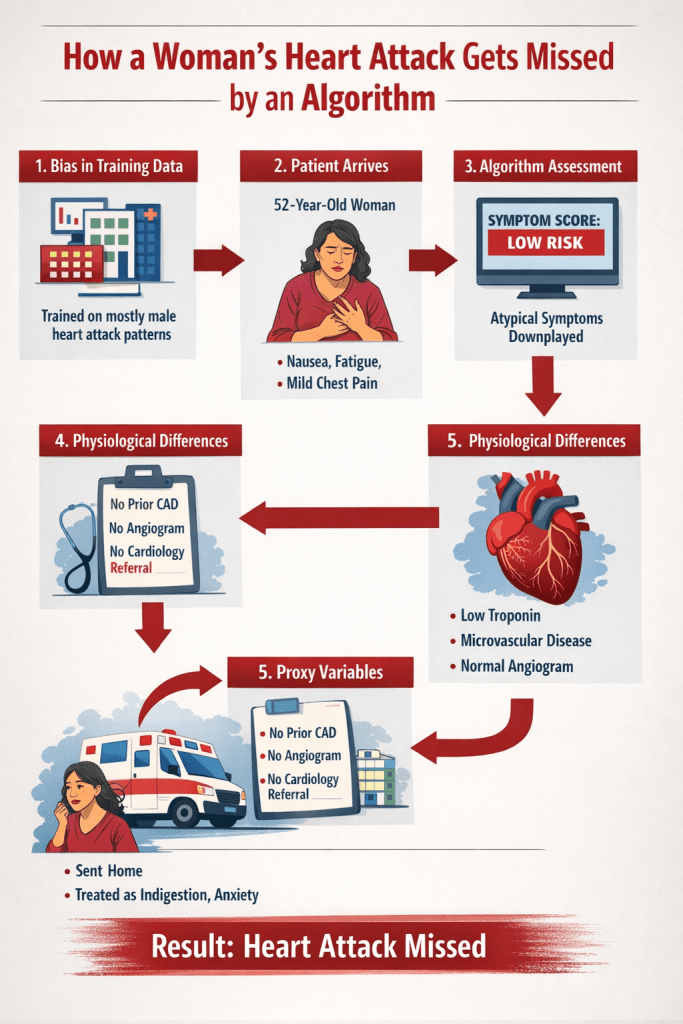

Step 1: How the Algorithm Interprets the Symptoms

The model compares this case to thousands of prior cases in its training dataset.

Historically, heart attack datasets contain many patients with classic male-pattern presentations, including:

- crushing substernal chest pain

- radiating left arm pain

- clear ST elevation

But this patient’s chart contains different descriptors.

The algorithm flags the symptoms as “atypical.”

Her risk score drops.
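The effect described in this step can be sketched with a toy logistic score. This is a minimal illustration, not the hospital tool's actual model: the feature names, weights, and intercept are all invented for the example, chosen to mimic a model that learned mostly from classic presentations.

```python
import math

# Hypothetical weights, invented for illustration only. They mimic a model
# trained mostly on "classic" presentations, so classic findings carry
# large weights and the descriptors in this patient's chart carry small ones.
WEIGHTS = {
    "crushing_chest_pain": 2.0,
    "left_arm_radiation": 1.5,
    "st_elevation": 2.5,
    "nausea": 0.1,
    "fatigue": 0.1,
    "mild_chest_pressure": 0.4,
    "shortness_of_breath": 0.3,
}
INTERCEPT = -3.0  # hypothetical baseline log-odds


def acs_risk(findings):
    """Return a toy probability from a logistic model over the findings present."""
    z = INTERCEPT + sum(WEIGHTS.get(f, 0.0) for f in findings)
    return 1.0 / (1.0 + math.exp(-z))


classic = ["crushing_chest_pain", "left_arm_radiation", "st_elevation"]
case = ["nausea", "fatigue", "mild_chest_pressure", "shortness_of_breath"]

print(f"classic presentation: {acs_risk(classic):.2f}")
print(f"this patient:         {acs_risk(case):.2f}")
```

With these made-up weights, the classic presentation scores far higher than this patient's chart, even though both describe possible cardiac ischemia. The gap comes from what the weights reward, not from the patient's actual risk.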

Step 2: Subtle Physiologic Differences

Initial labs return.

Troponin is slightly elevated but still close to the lower diagnostic threshold.

Women often have smaller myocardial mass, which can produce lower troponin levels during injury than in men.

If diagnostic thresholds were calibrated primarily on male data, the model may interpret the lab result as indicating a low probability of infarction.

The risk score drops again.
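A small sketch of the threshold problem, assuming a single pooled cutoff versus sex-specific cutoffs. All numbers below are illustrative placeholders in ng/L and do not come from any particular assay or from this patient's chart.

```python
# Illustrative cutoffs in ng/L. These are placeholders, NOT values from
# any specific troponin assay.
POOLED_CUTOFF = 30.0
SEX_SPECIFIC_CUTOFF = {"female": 15.0, "male": 35.0}


def flag_pooled(troponin):
    """Flag a result using one cutoff for every patient."""
    return troponin >= POOLED_CUTOFF


def flag_sex_specific(troponin, sex):
    """Flag a result using a cutoff chosen by the patient's sex."""
    return troponin >= SEX_SPECIFIC_CUTOFF[sex]


value = 22.0  # hypothetical result, chosen to fall between the two cutoffs

print("pooled cutoff flags it:      ", flag_pooled(value))
print("sex-specific cutoff flags it:", flag_sex_specific(value, "female"))
```

The same lab value is treated as unremarkable under the pooled cutoff and as abnormal under the female-specific one. A model built on the pooled cutoff would never see this result as a warning sign.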

Step 3: The Hidden Variable — Prior Diagnosis

The algorithm checks past medical history.

Findings:

- No prior coronary artery disease

- No previous angiogram

- No cardiology visits

At first glance this appears reassuring.

But this variable contains hidden bias.

Research consistently shows that women are:

- less likely to receive coronary angiography

- less likely to be referred to cardiology

- more likely to have cardiac symptoms attributed to anxiety or gastrointestinal causes

Because the algorithm learned from historical records, it interprets the absence of prior cardiac workups as a sign of lower risk, when it may only mean the patient was never investigated.

The score drops again.
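One way to picture this hidden variable is to compare a yes/no history flag with an encoding that separates "tested and negative" from "never tested." The sketch below is hypothetical: the category names and score adjustments are invented for illustration and are not taken from any real triage tool.

```python
from enum import Enum


class PriorWorkup(Enum):
    """Hypothetical categories for a patient's prior cardiac workup."""
    POSITIVE = "positive"          # workup done, disease found
    NEGATIVE = "negative"          # workup done, nothing found
    NEVER_TESTED = "never_tested"  # no workup on record


def naive_adjustment(has_cad_history):
    """Binary flag: any chart without documented disease looks reassuring."""
    return 0.0 if has_cad_history else -0.5  # made-up score adjustment


def explicit_adjustment(workup):
    """Three-way encoding: only an actual negative workup is reassuring."""
    if workup is PriorWorkup.POSITIVE:
        return 1.0
    if workup is PriorWorkup.NEGATIVE:
        return -0.5
    return 0.0  # never tested: no evidence either way


# This patient has no angiogram and no cardiology visits on record.
print("binary flag:       ", naive_adjustment(has_cad_history=False))
print("three-way encoding:", explicit_adjustment(PriorWorkup.NEVER_TESTED))
```

Under the binary flag, a patient who was never referred gets the same reassurance as one who had a clean angiogram. The three-way encoding leaves her score unchanged, because a missing workup is missing information, not a negative result.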

Step 4: A Different Type of Heart Disease

Meanwhile, the patient’s symptoms are actually being driven by coronary microvascular dysfunction.

Unlike classic plaque rupture, microvascular ischemia:

- may produce minimal ECG changes

- may not show large-vessel obstruction

- can produce more subtle biomarker elevations

These patterns were historically underrepresented in cardiac datasets.

The algorithm was never trained to recognize them well.

Step 5: The Algorithm’s Recommendation

The triage tool outputs a recommendation to the clinician:

Low risk for acute coronary syndrome

Suggested pathway:

- repeat troponin later

- consider GI causes

- possible discharge if stable

But the patient is experiencing a myocardial infarction.

Without careful clinical reassessment, the diagnosis could be delayed.

Where Nursing Assessment Matters

This is where bedside nursing observation becomes critical.

The triage nurse notices that the patient keeps saying:

“This feels different than anything I’ve felt before.”

She also observes:

- increasing diaphoresis

- worsening fatigue

- persistent chest pressure

The nurse escalates the concern to the provider despite the algorithm’s low-risk classification.

Further testing confirms myocardial infarction.

The patient receives treatment.

Summary

AI decision tools are only as good as the data they were trained on.

When historical datasets contain bias, algorithms can reproduce those patterns.

Clinical judgment—and especially nursing assessment—remains essential for catching what algorithms may miss.
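A simple way a hospital might look for this kind of bias is to compare how often the tool flags confirmed heart attacks in different patient groups. The sketch below assumes a hypothetical record format of (group, confirmed MI, flagged high risk); the records are made up for illustration and are not real data.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Return, per group, the share of confirmed MIs the tool flagged as high risk.

    records: iterable of (group, had_mi, flagged_high_risk) tuples.
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    for group, had_mi, flagged in records:
        if had_mi:  # sensitivity only counts patients who truly had an MI
            totals[group] += 1
            if flagged:
                hits[group] += 1
    return {group: hits[group] / totals[group] for group in totals}


# Made-up audit records, for illustration only.
records = [
    ("male", True, True), ("male", True, True),
    ("male", True, True), ("male", True, False),
    ("female", True, True), ("female", True, False),
    ("female", True, False), ("female", True, False),
    ("female", False, False), ("male", False, False),
]

result = sensitivity_by_group(records)
for group, sensitivity in result.items():
    print(f"{group}: {sensitivity:.0%} of confirmed MIs flagged")
```

A large gap between groups in a real audit would suggest the tool misses more heart attacks in one group, which is the pattern this case illustrates.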